Enterprise AI Security Built for Modern Enterprises

Secure AI for enterprises with dedicated tenants, strict access controls, auditability, and zero data retention at the LLM layer — built for compliance from day one.

Trusted by enterprises

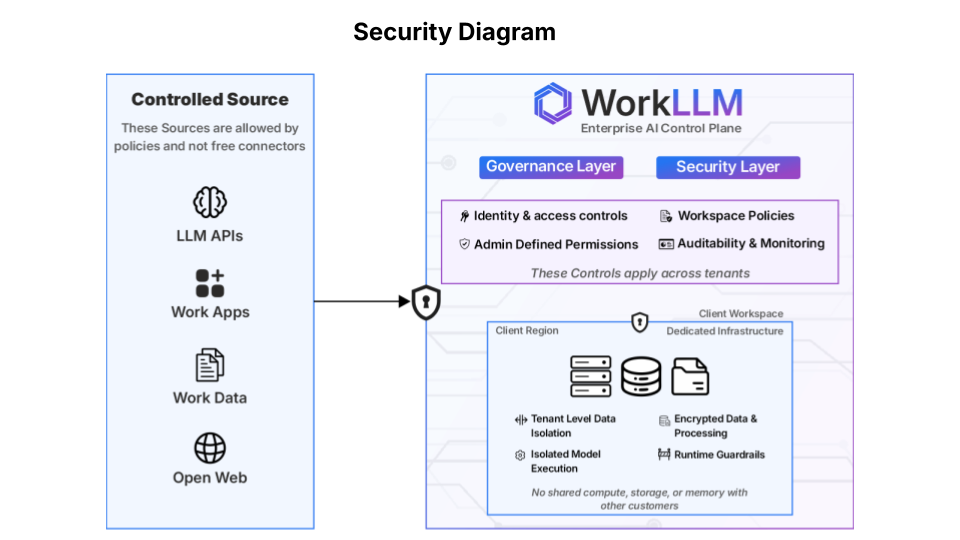

Our Enterprise AI Security Approach

Our enterprise AI security approach guides every architectural and governance decision across the platform.

Built for Enterprise Trust

Least Privilege Access

Accountability, Not Assumption

Enterprise Transparency

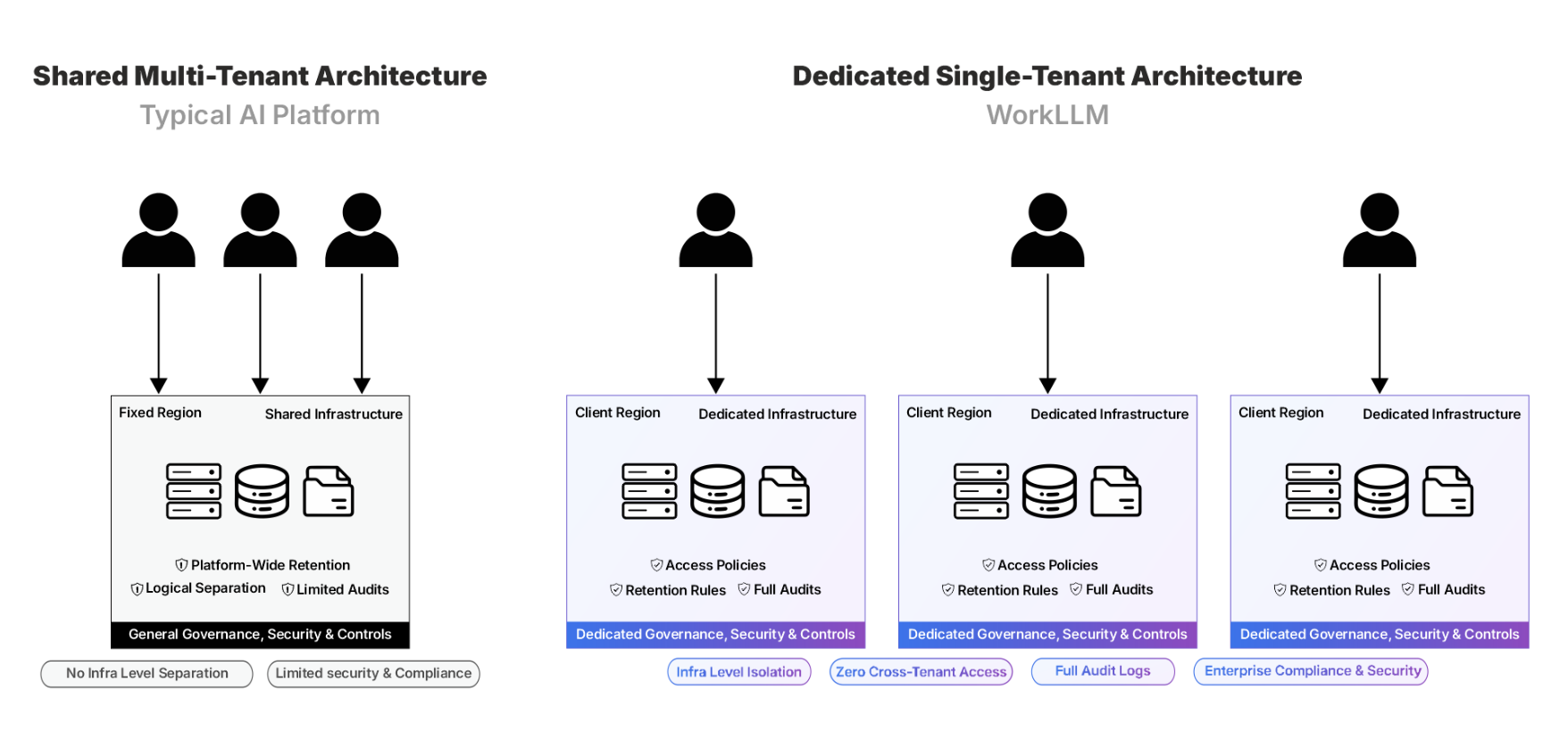

Dedicated Tenant (Cloud or On-Premise)

Every WorkLLM customer is provisioned into a dedicated cloud or on-premise tenant. This ensures strict isolation, predictable performance, and enterprise-grade security boundaries. Isolation is enforced at both the infrastructure and application layers. This architecture is foundational to secure AI for enterprises operating in regulated or high-compliance environments.

What This Enables

- No Cross-Tenant Exposure — Customer data never mixes with other tenants, by design.

- Independent Auditability — Each workspace maintains its own activity logs and governance trail.

- Enterprise Readiness — Simplifies security reviews and supports regulated and high-compliance environments.

- Deployment Flexibility — Meet internal IT, regulatory, or data residency requirements with cloud or on-premise options.

What's Isolated?

- Customer Data — Conversations, documents, and metadata are isolated within each customer’s tenant.

- AI Context & Embeddings — Vector stores and retrieval are scoped per tenant to prevent cross-workspace context leakage.

- Access & Permissions — Authentication rules and role-based access controls are enforced independently per workspace.

- Infrastructure Boundary — Each deployment runs in a fully isolated environment, whether in WorkLLM’s cloud or within your own infrastructure.
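To illustrate what per-tenant scoping of vector stores and retrieval can look like, here is a minimal Python sketch. It is not WorkLLM's implementation: `TenantVectorStore`, `TenantRegistry`, and the in-memory cosine search are hypothetical names and simplifications chosen for this example. The idea it shows is that every tenant gets its own store, so a query can only ever rank that tenant's own embeddings.

```python
import math
from dataclasses import dataclass, field


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class TenantVectorStore:
    """Hypothetical in-memory store holding embeddings for exactly one tenant."""
    tenant_id: str
    items: list = field(default_factory=list)

    def add(self, text, embedding):
        self.items.append((text, embedding))

    def search(self, query_embedding, k=3):
        # Only this tenant's items are ranked; no other store is consulted.
        ranked = sorted(self.items, key=lambda item: -cosine(query_embedding, item[1]))
        return [text for text, _ in ranked[:k]]


class TenantRegistry:
    """Hypothetical registry mapping each tenant ID to its own separate store."""

    def __init__(self):
        self._stores = {}

    def store_for(self, tenant_id):
        if tenant_id not in self._stores:
            self._stores[tenant_id] = TenantVectorStore(tenant_id)
        return self._stores[tenant_id]


registry = TenantRegistry()
registry.store_for("acme").add("acme onboarding guide", [1.0, 0.0])
registry.store_for("globex").add("globex pricing memo", [1.0, 0.0])

# The same query returns different results depending on the tenant scope.
print(registry.store_for("acme").search([1.0, 0.0]))
print(registry.store_for("globex").search([1.0, 0.0]))
```

In a real deployment the separation would also be enforced below the application layer (separate databases, networks, or hosts), which this in-process sketch does not attempt to model.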

Enterprise AI Security & Compliance Capabilities

As an AI governance platform, WorkLLM combines infrastructure isolation, policy enforcement, and operational transparency.

Encryption at Rest & in Transit

All customer data is encrypted at rest and protected in transit using modern industry standards to prevent unauthorized access.

Role-Based Access Control (RBAC)

Granular permissions ensure users and services only access what they’re authorized to — nothing more.
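As a rough illustration of role-based access control, the Python sketch below maps roles to permission sets and denies anything not explicitly granted. The role names and permission strings are assumptions made up for this example and do not describe WorkLLM's actual roles or permission model.

```python
# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "admin": {"assistant:read", "assistant:write", "integration:manage", "audit:read"},
    "member": {"assistant:read", "assistant:write"},
    "viewer": {"assistant:read"},
}


def is_allowed(role, permission):
    """Return True only if the role explicitly grants the permission (deny by default)."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


print(is_allowed("viewer", "assistant:read"))    # True
print(is_allowed("viewer", "assistant:write"))   # False
print(is_allowed("contractor", "assistant:read"))  # False: unknown roles get nothing
```

Defaulting to an empty permission set for unknown roles is what keeps the check aligned with least privilege: access has to be granted, never assumed.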

SSO & Authentication

Secure authentication with optional SAML-based SSO support for enterprise deployments.

Audit Logs & Activity Tracking

All meaningful actions are logged within each workspace to support compliance, investigations, and internal reviews.
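A workspace-scoped audit trail can be pictured as an append-only list of structured events. The Python sketch below is an assumption-laden example, not WorkLLM's log format: the `AuditEvent` fields, the `AuditLog` class, and the action name `role.grant` are all hypothetical.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record; frozen so an event cannot be modified after creation."""
    workspace_id: str
    actor: str
    action: str
    target: str
    timestamp: str


class AuditLog:
    """Hypothetical append-only log bound to a single workspace."""

    def __init__(self, workspace_id):
        self.workspace_id = workspace_id
        self._events = []

    def record(self, actor, action, target):
        event = AuditEvent(
            workspace_id=self.workspace_id,
            actor=actor,
            action=action,
            target=target,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self._events.append(event)
        return event

    def export(self):
        # One JSON object per event, suitable for handing to a reviewer or SIEM.
        return [json.dumps(asdict(event), sort_keys=True) for event in self._events]


log = AuditLog("workspace-1")
log.record(actor="alice", action="role.grant", target="bob")
for line in log.export():
    print(line)
```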

Input & Output Guardrails

Automatically redact sensitive data, enforce prompt restrictions, and maintain safe output policies across the workspace.
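To make the redaction idea concrete, here is a minimal Python sketch of an input guardrail that masks email addresses and card-like digit sequences before a prompt leaves the workspace. The patterns and placeholder tokens are assumptions for illustration; they are deliberately simple and are not WorkLLM's actual detection rules.

```python
import re

# Illustrative patterns only; production detectors are usually far more thorough.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text):
    """Replace email addresses and card-like numbers with placeholder tokens."""
    text = EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)
    text = CARD_PATTERN.sub("[REDACTED_CARD]", text)
    return text


prompt = "Contact jane@example.com about card 4111 1111 1111 1111 today"
print(redact(prompt))
# Contact [REDACTED_EMAIL] about card [REDACTED_CARD] today
```

The same function could be applied to model output before it is shown to the user, which is one way to cover both sides of the guardrail.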

No Training On Customer Data

Customer data is never used to train models and is processed transiently for inference only.

Zero Data Retention at the LLM Layer

LLM providers process each request transiently and do not retain prompts or responses once the request completes.

AI Governance Platform with Full Admin Control

Workspace admins control integrations, sharing, usage visibility, and access revocation from a central dashboard. Enterprise AI security requires continuous oversight, which is why all activity is logged, auditable, and permission-scoped.

FAQs

Is our data isolated from other customers?

Yes. Every WorkLLM customer is provisioned into a dedicated cloud or on-premise tenant. Data, AI context, embeddings, and access controls are isolated per tenant to prevent any cross-customer exposure.

Does WorkLLM use our data to train AI models?

No. Customer data is never used to train models. Prompts are processed only for inference and are not retained for training purposes.

What does “zero data retention at the LLM layer” mean?

It means the LLM providers process each request transiently for inference and do not store the prompt or the response afterward.

Where is our data stored?

Customer data is stored securely within your dedicated tenant, isolated from other organizations and accessible only to your workspace.

What access controls are available?

WorkLLM supports role-based access control (RBAC), allowing administrators to define permissions across users, assistants, agents, and integrations.

Are user actions auditable?

Yes. All meaningful actions — including access changes, configuration updates, and data usage — are logged and available to workspace administrators.

How is data encrypted?

All customer data is encrypted at rest and protected in transit using modern, industry-standard encryption protocols.

How does WorkLLM prevent unauthorized access?

Access is controlled through authentication, role-based permissions, session management, and tenant-level isolation across infrastructure and application layers.

Is WorkLLM suitable for regulated or high-compliance environments?

Yes. WorkLLM is designed with strong isolation, auditability, and access controls that support regulated and high-compliance use cases.

How can our security team get more information?

Security and compliance questions can be directed to info@workllm.io, and we’re happy to support security reviews or questionnaires.

Do you offer on-premise deployment?

Yes. WorkLLM can be deployed in our managed cloud, your private VPC, or fully on-premise depending on your security and compliance requirements.

Can WorkLLM be deployed in our own infrastructure?

Yes. We support dedicated deployments inside customer-controlled environments for organizations with strict regulatory or data residency requirements.
