Most AI systems today are powerful, but forgetful.

They answer well in the moment.

They summarize quickly.

They generate impressive output.

But when a conversation ends, the intelligence often disappears with it.

For teams, this is a serious limitation. Work is not a single prompt. It unfolds across conversations, projects, roles, and time. If AI cannot retain context across those layers, teams are forced to repeat themselves.

That is why we designed our memory architecture differently.

Why Memory Matters More Than Model Power

Large language models are getting better every year. Speed and accuracy continue to improve. But model capability alone does not solve the real problem teams face.

Teams struggle with:

- repeated explanations

- lost decisions

- fragmented discussions

- context that does not carry forward

According to research from Atlassian, knowledge workers spend a significant portion of their time searching for information or rebuilding context. The issue is not lack of intelligence. It is lack of structured memory.

If AI is going to support teams effectively, it must remember in layers.

Memory Is Not One Thing

Most AI tools treat memory as either on or off. Either the system remembers previous messages, or it does not.

Real work is more nuanced than that.

Context exists at different levels:

- A single conversation

- A specific task or workstream

- A broader project

- An individual’s preferences

- Organizational knowledge

Our architecture reflects this reality.

The Five Layers of Memory

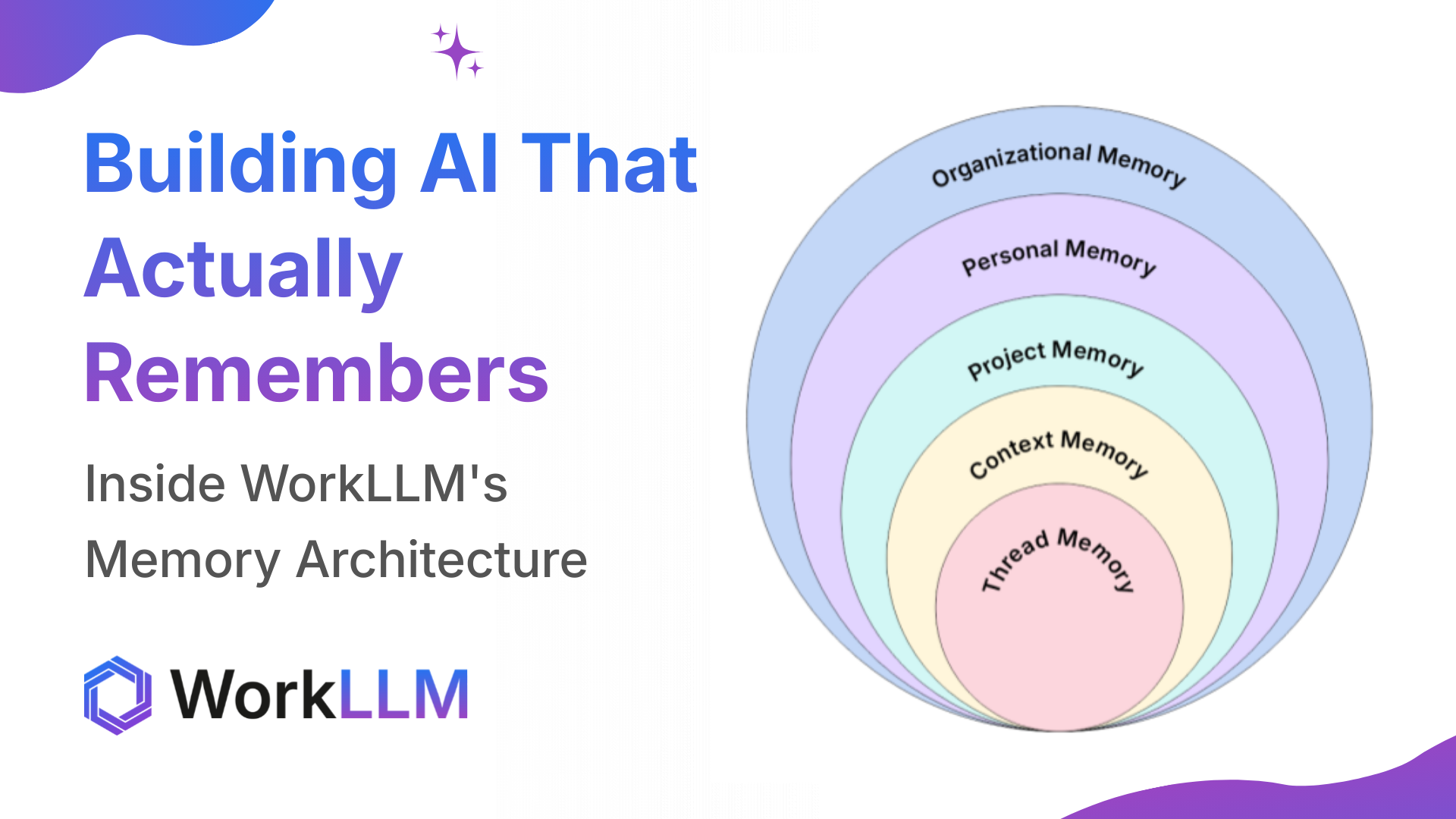

The diagram above illustrates how we structure memory from the most specific level to the most expansive.

| Memory Layer | Scope | What It Remembers | Why It Matters |
|---|---|---|---|
| Thread Memory | A single conversation | Messages, decisions, assumptions, and clarifications within one discussion | Maintains continuity so conversations do not reset or repeat. Ensures responses stay grounded in what has already been discussed. |
| Context Memory | A specific unit of work or task | Context tied exclusively to a particular initiative, topic, or workflow | Keeps responses precise and relevant to the current work without mixing unrelated discussions. |
| Project Memory | A broader project across conversations | Conversations, documents, decisions, and discussions connected to one project | Unifies work across meetings and tools so everyone operates from a shared baseline. Reduces repeated explanations. |
| Personal Memory | An individual user across interactions | Preferences, role, responsibilities, and working style | Personalizes AI responses and adapts output based on how each person works. Improves usability and efficiency. |
| Organizational Memory | Company-wide | Structure, priorities, institutional decisions, and long-term plans | Enables AI to answer company-level questions with context. Preserves institutional knowledge over time. |
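To make the layering concrete, here is a minimal TypeScript sketch of how a lookup across the five layers could work. It is an illustration, not our production implementation: the names `LayeredMemory`, `MemoryEntry`, `RequestScope`, `remember`, and `recall` are hypothetical, the in-memory array stands in for real storage, and the rule that the most specific layer wins is an assumption made for the example.

```typescript
// Hypothetical layer names, ordered from most specific to most expansive,
// mirroring the table above. None of these identifiers come from a real API.
type MemoryLayer = "thread" | "context" | "project" | "personal" | "organizational";

const LAYER_ORDER: MemoryLayer[] = [
  "thread",
  "context",
  "project",
  "personal",
  "organizational",
];

interface MemoryEntry {
  layer: MemoryLayer;
  scopeId: string; // e.g. a thread ID, project ID, user ID, or org ID
  key: string;
  value: string;
}

// The scope a request runs in: one ID per layer.
type RequestScope = Record<MemoryLayer, string>;

class LayeredMemory {
  // Assumption: a plain array stands in for whatever storage is really used.
  private entries: MemoryEntry[] = [];

  remember(entry: MemoryEntry): void {
    this.entries.push(entry);
  }

  // Walk the layers from most specific to most expansive and return the
  // first match. Within a layer, the most recently stored entry wins.
  recall(scope: RequestScope, key: string): MemoryEntry | undefined {
    for (const layer of LAYER_ORDER) {
      for (let i = this.entries.length - 1; i >= 0; i--) {
        const entry = this.entries[i];
        if (
          entry.layer === layer &&
          entry.scopeId === scope[layer] &&
          entry.key === key
        ) {
          return entry;
        }
      }
    }
    return undefined;
  }
}

// Usage: a project-level decision overrides the company-wide default.
const memory = new LayeredMemory();
memory.remember({
  layer: "organizational",
  scopeId: "org-1",
  key: "report-format",
  value: "quarterly summary",
});
memory.remember({
  layer: "project",
  scopeId: "proj-7",
  key: "report-format",
  value: "weekly status",
});

const scope: RequestScope = {
  thread: "t-1",
  context: "c-1",
  project: "proj-7",
  personal: "u-1",
  organizational: "org-1",
};

const hit = memory.recall(scope, "report-format");
console.log(hit?.value, hit?.layer); // prints "weekly status project"
```

A request made from a different project with no format decision of its own would fall through to the organizational entry. That fallback is what lets narrow layers stay precise while broad layers supply a shared baseline.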

Why Layered Memory Changes Team Performance

When memory is layered, intelligence compounds.

- Decisions do not disappear.

- Context does not reset.

- New team members ramp faster.

- Repeated explanations decrease.

Instead of treating every interaction as new, AI builds on what the team already knows.

Research from MIT Sloan has consistently shown that shared understanding and decision continuity are critical to high-performing teams. Layered memory supports exactly that.

From Conversation to Continuity

Most AI systems optimize for answers. We optimize for continuity. Continuity means that what your team discusses today still informs your work next week, next month, and next quarter.

That is the difference between a smart assistant and a system that strengthens how teams think and operate over time.

Memory is not a feature. It is the infrastructure that makes team-level intelligence possible.

Author Details

Ankit Sharma is a Full Stack Software Engineer specializing in scalable backend systems, AI-powered applications, and multi-tenant architectures. He has experience building high-performance platforms using Node.js, Next.js, PostgreSQL, MongoDB, AWS, and modern JavaScript technologies.